U0206584

Vidhya Ganesan

ROBOTS IN MINING

“In the ten years between 1988 and 1998, 256 miners died and over 64,000 were injured in mining accidents!”

“World metal prices have been falling for decades due to increases in efficiency. If a mine is unable to become more productive, it will go out of business!”

Yes! The vision of robotic industries, science fiction only a few years ago, is poised to become reality in the global mining sector, driven by the twin needs for safety and efficiency.

CSIRO's deputy chief executive for minerals and energy, Dr Bruce Hobbs, says research teams at CSIRO are trialling and developing a range of giant robotic mining devices that will either operate themselves under human supervision or be "driven" by a miner, in both cases from a safe, remote location. "It is all about getting people out of hazardous environments," he says. [1]

Robots will be doing jobs like laying explosives, going underground after blasting to stabilize a mine roof, or mining in areas where it is impossible for humans to work or even survive. Some existing examples of mining automation include:

· The world's largest "robot", a 3500 tonne coal dragline featuring automated loading and unloading

· A robot device for drilling and bolting mine roofs to stabilize them after blasting

· A pilotless burrowing machine for mining in flooded gravels and sands underground, where human operators cannot go

· A robotic drilling and blasting device for inducing controlled caving.

Robots must demonstrate efficiency gains or cost savings. The biggest robot of them all, the automated dragline swing, has the potential to save the coal mining industry around $280 million a year by delivering a four per cent efficiency gain. Major production trials of this robot are planned for later in the year 2000.
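
As a rough back-of-the-envelope check, the two figures quoted above can be combined to see what cost base they imply. The sketch below assumes, purely for illustration, that the saving equals the efficiency gain multiplied by an annual cost base; the source does not state how the $280 million figure was derived, and the function name is hypothetical.

```python
# Figures quoted in the text above.
annual_saving = 280e6    # around $280 million a year
efficiency_gain = 0.04   # four per cent


def implied_cost_base(saving, gain):
    """Cost base implied if saving = gain * base.

    This relationship is an assumption made for illustration;
    it is not stated in the source.
    """
    return saving / gain


base = implied_cost_base(annual_saving, efficiency_gain)
print(f"Implied annual cost base: ${base / 1e9:.1f} billion")
```

Under that assumption, a four per cent gain only yields a saving of this size if the affected operations cost several billion dollars a year, which gives a sense of the scale of dragline mining.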

Unlike their counterparts commonly found in the manufacturing industry, mining robots have to be smart. They need to sense their world, just like humans.

"Mining robots need sensors to measure the three dimensional structure of everything around them. As well as sight, robots must know where they are placed geographically within the minesite in real time and online," says Dr Corke. "CSIRO is developing vision systems for robots using cameras and laser devices to make maps of everything around the machine quickly and accurately, as it moves and works in its ever-changing environment," he says.

Dr Corke insists that the move to robots will not eliminate human miners, but it will change their jobs from arduous and hazardous ones to safe and intellectual ones.

The Technology:

Example 1: RecoverBot [2], used in mine rescue operations, is a 150-pound tethered rectangular unit. It has two maneuverable arms with grippers and four wheels that support an open box frame carrying power units, controllers and video cameras, each built with its own metal armor. The robot is lowered down the target shaft to prepare a recovery: its telerobotic eyes "see" for the surface controller, and its arms move the body into a second lowered net by lifting and dragging. An "aero shell" protects the robot from falling debris while it is lowered from a winch, and is removed when the bottom is reached. RecoverBot then performs its mission, observed from two points of view: the overhead camera that current mine rescue teams use to image deep shafts, and the robot itself, whose video gives the mine rescuer a second view. When the mission is completed, the robot is raised to the surface after the victim and the overhead camera have been withdrawn.

Example 2: Groundhog [3], a 1,600-pound mine-mapping robot created by graduate students in Carnegie Mellon's Mobile Robot Development class, made a successful trial run into an abandoned coal mine near Burgettstown, Pa. The four-wheeled, ATV-sized robot used laser rangefinders to create an accurate map of about 100 feet of the mine, which had been filled with water since the 1920s.

To fulfill its missions, the robot needs perception technology to build maps from sensor data, and it must be able to operate autonomously, deciding where to go, how to get there and, more importantly, how to return. Locomotion technology is vital because of the uneven floors in abandoned mines. The robot must also provide computer interfaces that let people view the results of its explorations and use the maps it develops. The robot incorporates a key technology developed at Carnegie Mellon called Simultaneous Localization and Mapping (SLAM). It enables robots to create maps in real time as they explore an area for the first time. The technology, developed by Associate Professor Sebastian Thrun of the Center for Automated Learning and Discovery, can be applied both indoors and out.
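
To give a feel for how a map can be built from laser rangefinder data, here is a minimal sketch of the mapping half of the problem only. It assumes the robot's pose is already known, whereas a real SLAM system has to estimate the pose at the same time as the map. It is not Groundhog's software; the function name, the grid encoding and the parameters are all hypothetical choices made for illustration.

```python
import math


def update_grid(grid, pose, ranges, angles, cell_size=1.0, max_range=10.0):
    """Mark cells along each laser beam as free and the hit cell as occupied.

    grid   : list of rows; -1 = unknown, 0 = free, 1 = occupied
    pose   : (x, y, heading) in metres and radians, assumed known here
    ranges : measured distance for each beam, in metres
    angles : beam angles relative to the robot heading, in radians
    """
    x, y, heading = pose
    rows, cols = len(grid), len(grid[0])
    step = cell_size / 2  # sample each beam every half cell
    for r, a in zip(ranges, angles):
        theta = heading + a
        # Cells the beam passed through before stopping are free space.
        for i in range(int(min(r, max_range) / step)):
            d = i * step
            cx = math.floor((x + d * math.cos(theta)) / cell_size)
            cy = math.floor((y + d * math.sin(theta)) / cell_size)
            if 0 <= cy < rows and 0 <= cx < cols:
                grid[cy][cx] = 0
        # A reading shorter than max_range means the beam hit something.
        if r < max_range:
            hx = math.floor((x + r * math.cos(theta)) / cell_size)
            hy = math.floor((y + r * math.sin(theta)) / cell_size)
            if 0 <= hy < rows and 0 <= hx < cols:
                grid[hy][hx] = 1
    return grid


# A 10 x 10 grid of unknown cells; the robot sits at (0.5, 0.5) facing
# along +x, and a single beam straight ahead reports a wall 4 m away.
grid = [[-1] * 10 for _ in range(10)]
update_grid(grid, (0.5, 0.5, 0.0), ranges=[4.0], angles=[0.0])
print(grid[0])
```

Repeating this update as the robot moves fills in the map cell by cell. The hard part that SLAM adds is working out where the robot is from the same sensor data, since an abandoned mine offers no external position reference.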

"Mining can be a hazardous job. Getting robots to do the job will make mining safer and ensure the long-term viability of the industry".

References:

[1] http://www.spacedaily.com/news/robot-00g.html

[2] http://www.usmra.com/MOLEUltraLight/MOLEUltrLight.htm

[3] http://www.cmu.edu/cmnews/extra/2002/021031_groundhog.html

u0205159 Du Xing

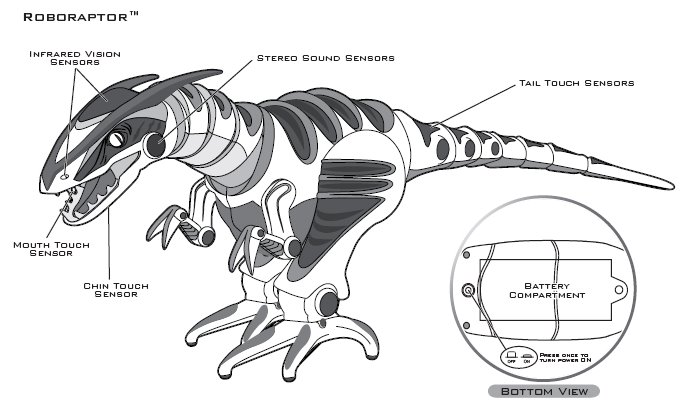

The Roboraptor measures about 80 cm from head to tail and comes to life with realistic motions and advanced artificial intelligence. It has more than 40 pre-programmed functions, a dinosaur-like artificial-intelligence personality, realistic biomorphic motions, and both direct-control and autonomous (free-roam) modes. It has three fluid bipedal gaits (walking, running and predatory) and realistic biomorphic body movements such as turning its head and neck and whipping its tail. It even has three distinct moods: hunting, cautious and playful.

It can interact autonomously with its environment, responding with mood-specific behaviours and sounds. Touch sensors on its tail, chin and mouth and sonic sensors on its head allow it to respond to touch and sound, and an infrared vision system detects objects in its path or approaching it. Its powerful jaws can play tug-of-war games, “biting” and pulling. With “laser” tracking technology, you can trace a path on the ground and Roboraptor will follow it. It also has a visual and sonic guard mode, and it even responds to commands from Robosapien V2.

Roboraptor will start to explore his environment autonomously in Free-Roam Mode if left alone for more than three minutes. While in Free-Roam Mode he will avoid obstacles using his Infrared Vision Sensors. Occasionally he will stop moving to listen for sharp, loud sounds. After 5 to 10 minutes of exploration Roboraptor will power down. Roboraptor can also be put into Guard Mode, which he confirms by performing a head rotation. In Guard Mode Roboraptor uses his Infrared Vision Sensors and Stereo Sound Sensors to guard the area immediately around him. If he hears a sound or sees movement he will react with a roar and become animated. Occasionally Roboraptor will turn his head and sniff.

Roboraptor has Infrared Vision Sensors that enable him to detect movement to either side of him. However, infrared functions can be affected by bright sunlight, fluorescent lighting and electronically dimmed lighting. Upon activation Roboraptor will be sensitive to sound, vision and touch. If you trigger the Vision Sensor on one side more than three times in a row, Roboraptor will get frustrated and turn away from you. This will also happen if you leave him standing with his head facing a wall. Roboraptor uses his Vision Sensors to avoid obstacles while wandering around. While walking he cannot detect movement, so he will react to you as if you were an obstacle.
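
The "frustration" behaviour described above can be modelled as a simple counter of consecutive triggers on the same side. The sketch below is only an illustration of that rule. It is not WowWee's firmware, and the class name, method name and return strings are hypothetical.

```python
class VisionFrustration:
    """Toy model of the 'more than three times in a row' rule."""

    LIMIT = 3  # triggers tolerated on one side before turning away

    def __init__(self):
        self.last_side = None
        self.count = 0

    def trigger(self, side):
        """Register a vision-sensor trigger on 'left' or 'right'."""
        if side == self.last_side:
            self.count += 1
        else:
            # A trigger on the other side starts a new run.
            self.last_side, self.count = side, 1
        if self.count > self.LIMIT:
            # Frustrated: turn away and start counting afresh.
            self.last_side, self.count = None, 0
            return "turn_away"
        return "react"


robot = VisionFrustration()
reactions = [robot.trigger("left") for _ in range(4)]
print(reactions)
```

Switching sides resets the count in this model, so alternating left and right triggers would never frustrate the robot. Whether the real toy behaves that way is an assumption.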

Roboraptor can be guided around using “Laser” targeting. A green Targeting Assist Light from the remote control will make the Roboraptor move towards the light. Roboraptor’s Infrared Vision System and the “laser” targeting are based on reflection. This means that he can see highly reflective surfaces like white walls or mirrors more easily and at greater distances. Roboraptor also walks best on smooth surfaces.

With his stereo sound sensors, Roboraptor can detect sharp, loud sounds (like a clap) to his left, to his right and directly ahead. He only listens when he is not moving or making a noise. His reaction depends on his mood:

· In Hunting Mood, when he hears a sharp sound to his side he will turn his head to look at the source. If he hears another sharp sound from the same direction he will turn his body towards the source. If he hears a sharp sound directly in front of him he will take a few steps toward the source.

· In Cautious Mood, when he hears a sharp sound to his side he will turn his head to look at the source. If he hears a sound straight ahead he will walk away from it.

· In Playful Mood, when he hears a sharp sound to his side he will turn his head to look at the source. If he hears a sound straight ahead, he will take a few steps backward, then take a few steps forward.
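
The mood-dependent sound reactions described above amount to a small lookup keyed on mood and sound direction. The following sketch restates those rules in code. It is an illustration only, not the toy's actual control program, and the function name, argument names and reaction strings are hypothetical.

```python
# Reaction to a sound from straight ahead, per mood (from the text above).
AHEAD_REACTIONS = {
    "hunting": "take a few steps toward the sound",
    "cautious": "walk away from the sound",
    "playful": "step backward, then step forward",
}


def react_to_sound(mood, direction, repeated=False, busy=False):
    """Return the reaction to a sharp, loud sound.

    mood      : 'hunting', 'cautious' or 'playful'
    direction : 'left', 'right' or 'ahead'
    repeated  : True if this is a second sound from the same side
    busy      : True while the robot is moving or making a noise
    """
    if busy:
        # He only listens when he is not moving or making a noise.
        return "no reaction"
    if direction in ("left", "right"):
        # Only Hunting Mood escalates on a repeated sound from one side.
        if mood == "hunting" and repeated:
            return "turn body toward the sound"
        return "turn head toward the sound"
    return AHEAD_REACTIONS[mood]


print(react_to_sound("hunting", "left", repeated=True))
print(react_to_sound("cautious", "ahead"))
```

In all three moods the first reaction to a sound from the side is the same, so the moods differ only in how the robot handles a repeated side sound and a sound from straight ahead.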

Roboraptor has multiple touch sensors which allow him to explore his environment and respond to human interaction. Pressing the sensors on Roboraptor’s tail activates the Tail Touch Sensors, which produce a reaction that varies depending on his mood. Pressing the sensor under Roboraptor’s chin activates the Chin Touch Sensor, which also produces a mood-dependent reaction. There is also a mouth touch sensor on the roof of Roboraptor’s mouth. In Hunting Mood, touching this sensor will trigger a biting and tearing animation. In Cautious and Playful Moods, Roboraptor will play tug-of-war with whatever is in his mouth.

You might wonder how Roboraptor’s moods are controlled: it is done with a button on the remote control. Hunting Mood is the default mood that Roboraptor is in when turned on. He can also be set to Playful Mood or Cautious Mood. As mentioned above, the moods determine the way Roboraptor reacts to some of his sensors. In Playful Mood, Roboraptor will nuzzle your hand if you approach from the side. In Cautious Mood, Roboraptor will turn his head away from movement to the side. In Hunting Mood, his reactions are much less friendly.

Technology: biomorphic robotics

Biomorphic robotics is a subdiscipline of robotics focused on emulating the mechanics, sensor systems, computing structures and methodologies used by animals. In short, it is about building robots inspired by the principles of biological systems.

One of the most prominent researchers in the field of biomorphic robotics has been Mark W. Tilden, the designer of the Robosapien series of toys. One of the more prolific annual biomorphic gatherings is the Neuromorphic Engineering Workshop. Academics from all around the world meet there to share their research in what they call a field of engineering based on the design and fabrication of artificial neural systems, such as vision chips, head-eye systems, and roving robots, whose architecture and design principles are based on those of biological nervous systems.

A related subdiscipline is neuromorphics, which focuses on the control and sensor systems, while biomorphics focuses on the whole system.

http://www.roboraptoronline.com/

Other toys by WowWee and Mark Tilden: Robosapien and Robopet.

The success of RoboWalker will change the lives of many disabled and elderly people. It will definitely replace the wheelchair as the preferred means of locomotion for these people. Not only will this innovation greatly reduce the inconvenience faced by people suffering from weakness in the lower extremities, but such a breakthrough in technology would also bring huge cost savings to the social welfare system, since the need for modifications to improve mobility (wheelchair-friendly houses, stair lifts, car lifts, home aids, etc.) would be reduced.
